February 23, 2022

feature

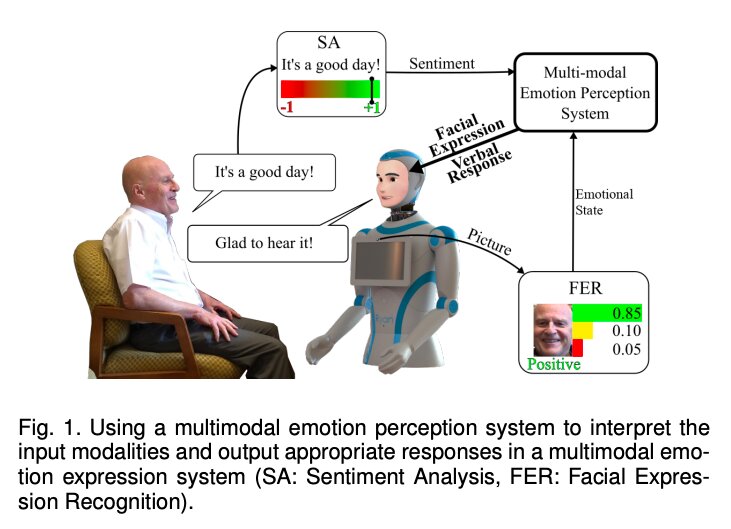

Credit: Abdollahi et al.

Socially assistive robots (SARs) are a class of robotic systems specifically designed to help vulnerable or older users complete everyday activities. In addition to increasing users' independence, these robots could provide mental stimulation and offer basic emotional support.

To support users most effectively, however, these robots should be able to engage in meaningful social interactions, identifying users' emotions and responding to them appropriately. This could ultimately increase users' trust in the robots while also promoting their emotional wellbeing.

Researchers at the University of Denver, DreamFace Technologies, and the University of Colorado recently carried out a small pilot study exploring how older adults' perceptions of socially assistive robots change depending on whether the robots have artificial emotional intelligence. Their findings, published in IEEE Transactions on Affective Computing, suggest that older adults tend to perceive robots programmed to behave more empathically as more engaging and likable.

The researchers carried out their study with 10 older adults living at Eaton Senior Communities, an independent senior living facility in Lakewood, Colorado. The participants were asked to interact with Ryan, a socially assistive robot created by DreamFace Technologies, and then share their feedback and perceptions. The researchers used two versions of the robot: one exhibited empathic behavior, while the other did not respond to users' emotions.

“The empathic Ryan utilizes a multimodal emotion recognition algorithm and a multimodal emotion expression system,” Hojjat Abdollahi and his colleagues explained in their paper. “Using different input modalities for emotion (i.e., facial expression and speech sentiment), the empathic Ryan detects users’ emotional state and utilizes an affective dialog manager to generate a response. On the other hand, the non-empathic Ryan lacks facial expression and uses scripted dialogs that do not factor in the users’ emotional state.”
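The paper's exact recognition and dialog algorithms are not described in this article, so the following is only a minimal sketch of the general idea of multimodal emotion recognition feeding an affective response. It assumes a hypothetical three-emotion label set, a facial-expression classifier that outputs per-emotion probabilities, a speech-sentiment polarity score in [-1, 1], simple weighted late fusion, and canned response templates standing in for an affective dialog manager. None of these names or weights come from the study.

```python
# Hypothetical sketch of multimodal emotion fusion; NOT the authors' algorithm.

# Assumed emotion label set; the study's actual categories may differ.
EMOTIONS = ("happy", "neutral", "sad")

# Assumed response templates standing in for an affective dialog manager.
RESPONSES = {
    "happy": "You seem cheerful today! What made your day good?",
    "neutral": "How has your day been so far?",
    "sad": "I'm sorry you're feeling down. Would you like to talk about it?",
}


def sentiment_to_distribution(polarity: float) -> dict[str, float]:
    """Map a speech-sentiment polarity in [-1, 1] to a rough emotion distribution."""
    polarity = max(-1.0, min(1.0, polarity))  # clamp out-of-range scores
    pos = max(polarity, 0.0)
    neg = max(-polarity, 0.0)
    # The remaining mass (1 - |polarity|) is treated as neutral, so values sum to 1.
    return {"happy": pos, "neutral": 1.0 - pos - neg, "sad": neg}


def fuse(face_probs: dict[str, float], speech_probs: dict[str, float],
         face_weight: float = 0.6) -> dict[str, float]:
    """Weighted late fusion of two per-modality emotion distributions."""
    fused = {
        e: face_weight * face_probs.get(e, 0.0)
        + (1.0 - face_weight) * speech_probs.get(e, 0.0)
        for e in EMOTIONS
    }
    total = sum(fused.values())
    if total == 0:
        # No evidence from either modality: fall back to a uniform distribution.
        return {e: 1.0 / len(EMOTIONS) for e in EMOTIONS}
    return {e: v / total for e, v in fused.items()}


def respond(face_probs: dict[str, float], polarity: float,
            face_weight: float = 0.6) -> tuple[str, str]:
    """Pick the most likely fused emotion and return it with a matching reply."""
    fused = fuse(face_probs, sentiment_to_distribution(polarity), face_weight)
    state = max(fused, key=fused.get)
    return state, RESPONSES[state]


if __name__ == "__main__":
    # A mostly sad face plus mildly negative speech yields the "sad" reply.
    print(respond({"happy": 0.1, "neutral": 0.3, "sad": 0.6}, polarity=-0.5))
```

A non-empathic variant, in the spirit of the study's comparison, would skip both recognition steps and simply return the next line of a fixed script regardless of the user's state.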

During the experiments, the 10 participants were asked to interact with Ryan in 15-minute sessions twice a week over three consecutive weeks. The participants were randomly assigned to two groups: while all participants interacted with both versions of Ryan, the order in which they did so differed between groups.
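The article does not detail the randomization procedure, so this is only a minimal sketch of a counterbalanced crossover assignment of the kind described: participants are shuffled, split into two groups, and each group experiences both robot versions in opposite orders. The participant IDs, the even split, and the function name `assign_crossover` are hypothetical; how the six sessions were divided between conditions is not specified and is not modeled here.

```python
# Hypothetical sketch of a randomized, counterbalanced two-group crossover.
import random

CONDITIONS = ("empathic", "non-empathic")


def assign_crossover(participant_ids, seed=None):
    """Shuffle participants, split them in half, and give each half the opposite order."""
    rng = random.Random(seed)  # a seed makes the assignment reproducible
    ids = list(participant_ids)
    rng.shuffle(ids)
    half = len(ids) // 2
    schedule = {}
    for pid in ids[:half]:
        schedule[pid] = CONDITIONS          # empathic first, then non-empathic
    for pid in ids[half:]:
        schedule[pid] = CONDITIONS[::-1]    # non-empathic first, then empathic
    return schedule


if __name__ == "__main__":
    for pid, order in sorted(assign_crossover([f"P{i:02d}" for i in range(1, 11)], seed=42).items()):
        print(pid, " -> ".join(order))
```

Counterbalancing the order in this way helps separate the effect of the robot version from simple familiarity effects, since half the participants meet each version first.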

Participants were asked to rate their mood on a scale of 0 to 10 both before and after each interaction with Ryan. In addition, at the end of the study the researchers interviewed participants individually and asked them to complete a survey.

Analyzing the collected data, Abdollahi and his colleagues found that users benefited from interacting with both the empathic and the non-empathic version of the robot. Nonetheless, the feedback shared in the exit surveys suggests that users perceived the empathic Ryan as more engaging and likable.

In the future, these findings could pave the way for studies with larger samples of participants that further assess how artificial emotional intelligence affects users' perceptions of social robots. The work could also inspire more roboticists to develop techniques that enhance the ability of socially assistive robots to gauge human emotions and adapt their facial expressions or interaction style accordingly.

Hojjat Abdollahi et al, Artificial Emotional Intelligence in Socially Assistive Robots for Older Adults: A Pilot Study, IEEE Transactions on Affective Computing (2022). DOI: 10.1109/TAFFC.2022.3143803

© 2022 Science X Network

Citation:

Artificial emotional intelligence could change senior users’ perceptions of social robots (2022, February 23)

retrieved 23 February 2022

from https://techxplore.com/news/2022-02-artificial-emotional-intelligence-senior-users.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without the written permission. The content is provided for information purposes only.