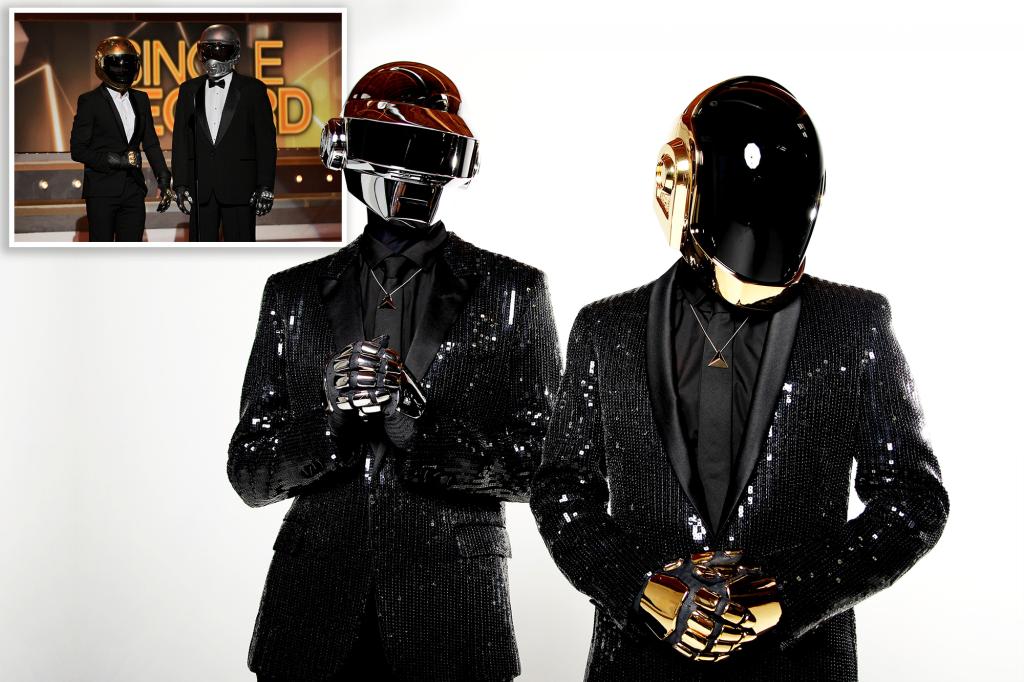

Thomas Bangalter, one of the two former members of the Grammy Award-winning music duo Daft Punk, revealed he is “terrified” of artificial intelligence — despite the pair famously performing as robots for nearly 30 years.

The 48-year-old musician said the growing popularity of AI runs counter to what Daft Punk actually stood for, despite the performers’ robotic stage personas.

“We tried to use these machines to express something extremely moving that a machine cannot feel, but a human can,” he explained to the BBC on Tuesday, adding that glorifying the rise of robots was not their goal.

“We were always on the side of humanity and not on the side of technology,” he said of Daft Punk, which consisted of him and Guy-Manuel de Homem-Christo, 49, before they broke up in 2021.

“I almost consider the character of the robots like a Marina Abramović performance art installation that lasted for 20 years,” he said, referring to the eclectic Serbian artist.

The “Harder, Better, Faster, Stronger” songster — who said the duo “blurred the line between reality and fiction” — added that his concern with AI comes when it goes beyond making music and leads to “the obsolescence of man.”

“As much as I love this character, the last thing I would want to be, in the world we live in, in 2023, is a robot,” he said.

Computer-generated “deepfake” images have fooled the internet twice in recent weeks: once with Pope Francis’ swaggy (and phony) white puffer jacket, and again with fake photos of former President Donald Trump causing a scene as he “got arrested” by the NYPD.

Last week, it was reported that an AI chatbot allegedly convinced a Belgian man to commit suicide.

The news arrived as a number of tech industry leaders called for an “immediate pause” on the training of advanced AI systems for at least six months.

But AI expert Eliezer Yudkowsky argued the proposed moratorium doesn’t go far enough.

“It took more than 60 years between when the notion of Artificial Intelligence was first proposed and studied, and for us to reach today’s capabilities,” he said. “Solving safety of superhuman intelligence — not perfect safety, safety in the sense of ‘not killing literally everyone’ — could very reasonably take at least half that long.”
