Creating 3D content from RGB-D scans is a popular task in computer vision. A recent paper proposes stylizing the reconstructed mesh of an indoor scene with an explicit RGB texture. For instance, a user could explore a scanned room in VR and see it painted in the style of Van Gogh.

Image credit: Matthias Niessner (still image from YouTube video)

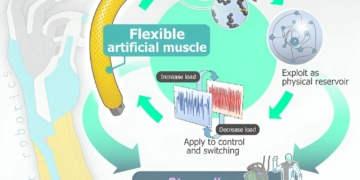

The method formulates texturing as an energy minimization problem that combines texture mapping with style transfer: the texture is optimized by minimizing style transfer losses. A depth-aware optimization produces stylization patterns of equal size, optimized in a view-independent way. To keep those patterns unstretched, the optimization also takes into account the angle between the surface normal and the view direction.
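To make the two geometric cues concrete, here is a minimal NumPy sketch of how per-pixel depth and viewing angle could be computed and turned into optimization hints. It is an illustration under stated assumptions, not the paper's implementation: the function names, the `min_cos` threshold, the `min_depth` reference distance, and the rule of one resolution level per doubling of distance are all hypothetical choices made for this example.

```python
import numpy as np

def angle_cosine(normals, view_dirs):
    """Cosine of the angle between surface normal and direction to the camera.

    Both inputs have shape (..., 3) and are assumed to be non-zero vectors.
    Values near 1 mean the surface faces the camera; values near 0 mean it is
    seen at a grazing angle, where a 2D pattern would appear stretched.
    """
    n = normals / np.linalg.norm(normals, axis=-1, keepdims=True)
    v = view_dirs / np.linalg.norm(view_dirs, axis=-1, keepdims=True)
    return np.clip(np.sum(n * v, axis=-1), 0.0, 1.0)

def pixel_weights(normals, view_dirs, min_cos=0.3):
    """Hypothetical loss weight: ignore grazing views, favor frontal ones."""
    cos = angle_cosine(normals, view_dirs)
    return np.where(cos >= min_cos, cos, 0.0)

def depth_level(depth, min_depth=0.5, num_levels=4):
    """Hypothetical depth bucketing: one level per doubling of distance.

    Far surfaces cover fewer pixels, so a fixed-size 2D pattern would look
    larger on them in 3D. Assigning each pixel a coarser image level as depth
    grows is one way to keep pattern size roughly uniform on the surface.
    """
    ratio = np.maximum(depth, min_depth) / min_depth
    level = np.floor(np.log2(ratio)).astype(int)
    return np.clip(level, 0, num_levels - 1)

# Tiny example: three pixels looking at a floor with normal (0, 0, 1).
normals = np.array([[0.0, 0.0, 1.0]] * 3)
view_dirs = np.array([[0.0, 0.0, 2.0],   # frontal view
                      [1.0, 0.0, 1.0],   # 45 degree view
                      [1.0, 0.0, 0.1]])  # grazing view
depth = np.array([0.5, 1.0, 2.0])

weights = pixel_weights(normals, view_dirs)
levels = depth_level(depth)
```

In a full pipeline, weights like these would scale each pixel's contribution to the style loss, and the depth level would select which image resolution that pixel is stylized at. Both uses are assumptions about how such cues are typically applied.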

The experiments show that the proposed method produces consistent textures and mitigates view-dependent artifacts. It outperforms state-of-the-art methods both qualitatively and quantitatively. Moreover, the explicit texture representation can be used directly with traditional rendering pipelines.

We apply style transfer on mesh reconstructions of indoor scenes. This enables VR applications like experiencing 3D environments painted in the style of a favorite artist. Style transfer typically operates on 2D images, making stylization of a mesh challenging. When optimized over a variety of poses, stylization patterns become stretched out and inconsistent in size. On the other hand, model-based 3D style transfer methods exist that allow stylization from a sparse set of images, but they require a network at inference time. To this end, we optimize an explicit texture for the reconstructed mesh of a scene and stylize it jointly from all available input images. Our depth- and angle-aware optimization leverages surface normal and depth data of the underlying mesh to create a uniform and consistent stylization for the whole scene. Our experiments show that our method creates sharp and detailed results for the complete scene without view-dependent artifacts. Through extensive ablation studies, we show that the proposed 3D awareness enables style transfer to be applied to the 3D domain of a mesh. Our method can be used to render a stylized mesh in real-time with traditional rendering pipelines.

Link to the research paper: https://arxiv.org/abs/2112.01530